March 24, 2026

Part 1: AI Is Locked and Loaded to Go on a Roll

Take a minute to think about a recent conversation you had - perhaps at a dinner party - when you were asked a question on a topic you cared about or knew a lot about. Because you had already given that subject considerable thought, you may have been locked and loaded, ready to deliver a beautiful, long, and coherent answer. As you spoke, each sentence flowed naturally into the next. Each word, each conjugation, seemed to appear without conscious effort. You were on a roll!

In such moments, you are not deeply planning each sentence in advance, nor carefully selecting every word before it comes out. Most often, you are not constructing your argument through a deliberate logical progression before you begin speaking. Instead, each sentence emerges fluidly. Without thinking explicitly about each word, your brain’s neural network draws on years of accumulated knowledge and on grammar rules drilled into your head since kindergarten, allowing you to produce coherent speech almost automatically.

This is a useful analogy for how the current generation of generative AI works.

Large language models (LLMs) such as ChatGPT, Gemini, and Claude generate their responses in a similar way: one word at a time (strictly speaking, one token, which may be a whole word or a fragment of one). To make this possible, their creators - leading LLM companies like Anthropic, Google, and OpenAI - spend enormous sums training each version of their models. During training, grammar rules, sentence structures, and conceptual associations are implicitly encoded into the LLM's neural network as weights: billions of numerical parameters that, taken together, determine how likely each word is to follow a given sequence of words.

Once this long and expensive training phase is complete, LLM companies can release the model to the public because, in effect, it is locked and loaded. When you ask it a question, it is ready to string together the next word and the next sentence based on those probabilities, producing a coherent response without explicitly "thinking" about each step. In technical jargon, this is called autoregressive generation: each new word is predicted from the words that came before it, including the ones the model itself has just produced. In practice, we see that the LLM, too, is on a roll!
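To make "autoregressive generation" a little more concrete, here is a deliberately tiny sketch in Python. It assumes a hypothetical, hand-written table of next-word probabilities (`NEXT_WORD_PROBS`) standing in for what a real LLM computes from its learned weights, and it looks only at the last word, whereas a real model conditions on the entire preceding text and works on tokens rather than whole words. The loop shows the core idea: pick the next word, append it to the output, and feed the result back in to pick the word after that.

```python
# Hypothetical toy "model": for each word, the probabilities of the word that follows.
# A real LLM computes such probabilities from learned weights and the full context.
NEXT_WORD_PROBS = {
    "<start>": {"the": 0.6, "a": 0.4},
    "the": {"model": 0.7, "answer": 0.3},
    "a": {"model": 0.5, "answer": 0.5},
    "model": {"answers": 0.8, "<end>": 0.2},
    "answer": {"<end>": 1.0},
    "answers": {"<end>": 1.0},
}


def generate_greedy(max_words: int = 10) -> list[str]:
    """Autoregressive generation: each new word is chosen given the words produced so far."""
    words = ["<start>"]
    for _ in range(max_words):
        probs = NEXT_WORD_PROBS[words[-1]]
        # Greedy decoding: always take the single most probable next word.
        next_word = max(probs, key=probs.get)
        if next_word == "<end>":
            break
        words.append(next_word)  # the output becomes part of the input for the next step
    return words[1:]  # drop the <start> marker


print(" ".join(generate_greedy()))  # the model answers
```

Because this version always takes the top-ranked word, it produces the same sentence every time; swapping the `max` call for a random draw weighted by the probabilities would let the same table produce different sentences on different runs.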

This analogy also sheds light on how LLMs can hallucinate - those moments when a model suddenly goes off the rails and starts making things up. Think back to a time when you were on a roll, talking fluidly about a subject, and then made a verbal gaffe: saying something you really shouldn't have, simply because your brain associated a particular idea or phrase with the conversation at hand. Had you paused to think more carefully, you would likely have caught yourself - but in the moment, the associated words just came out, and you were left embarrassed.

Similarly, LLMs do not inherently evaluate whether the content they generate is logically correct or factually grounded. When they hallucinate, they are often just following probabilistic associations learned during training, pushed along by the momentum of their own output. Moreover, because the LLM typically draws each word from a probability table rather than always picking the single most likely option, its answer to the same question can differ slightly each time you ask. There is therefore always a chance that the next word or sentence it chooses is one of the less probable ones: an awkward choice, like a gaffe or a falsehood. Worse, once the LLM has stated that gaffe or falsehood, it may keep generating sentences associated with it, sliding down a slippery slope of extended hallucination.
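The sampling behavior described above can be sketched with a toy example as well. This is an illustration under stated assumptions, not how any particular product is implemented: the table `NEXT_WORD_PROBS`, its words, and its probabilities are hypothetical, and real models sample from a distribution over a vocabulary of many thousands of tokens, often reshaped by settings such as temperature. Because each next word is drawn at random in proportion to its probability, repeated runs give different answers; and once the improbable "Sydney" is drawn, every later word builds on that mistake.

```python
import random
from collections import Counter

# Hypothetical toy next-word table. "Sydney" is a plausible-sounding but wrong continuation;
# once it is chosen, the words that follow compound the error.
NEXT_WORD_PROBS = {
    "<start>": {"the": 1.0},
    "the": {"capital": 1.0},
    "capital": {"of": 1.0},
    "of": {"Australia": 1.0},
    "Australia": {"is": 1.0},
    "is": {"Canberra": 0.9, "Sydney": 0.1},
    "Canberra": {"<end>": 1.0},
    "Sydney": {"which": 1.0},
    "which": {"hosts": 1.0},
    "hosts": {"parliament": 1.0},
    "parliament": {"<end>": 1.0},
}


def generate_sampled(rng: random.Random, max_words: int = 20) -> str:
    """Autoregressive generation that samples each word instead of always taking the top one."""
    words = ["<start>"]
    for _ in range(max_words):
        probs = NEXT_WORD_PROBS[words[-1]]
        # Draw the next word in proportion to its probability,
        # so a less likely word is occasionally chosen.
        next_word = rng.choices(list(probs), weights=list(probs.values()))[0]
        if next_word == "<end>":
            break
        words.append(next_word)
    return " ".join(words[1:])


rng = random.Random(0)  # fixed seed so the run is reproducible
counts = Counter(generate_sampled(rng) for _ in range(1000))
for answer, n in counts.most_common():
    print(n, answer)
```

Run many times, the correct answer dominates, but a minority of runs take the improbable branch and then continue confidently down it.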

If you have used LLMs regularly over the past year or two, however, you may have noticed that these hallucinations are becoming less frequent - especially in the most recent generations of so-called reasoning models, which have been trained to check themselves. One way humans reduce gaffes is by becoming more deliberate: performing a quick reality check on what we are about to say and stopping ourselves before we articulate something incorrect or embarrassing.

Recent LLM systems are trained to approximate this behavior through mechanisms that encourage similarly deliberate reasoning. Before committing to a final answer, they work through an internal chain of thought and check it for errors and missteps. As a result, these models increasingly behave less like unfiltered dinner-party guests and more like careful participants in measured conversations or business negotiations.

Another way humans improve the quality of their arguments is through preparation. Before an important meeting or a high-school debate, we study the subject, rehearse responses, and do dry runs to learn from our mistakes. With sufficient preparation, we can often respond coherently even to questions we did not explicitly anticipate. A good education and broad exposure to knowledge make this possible.

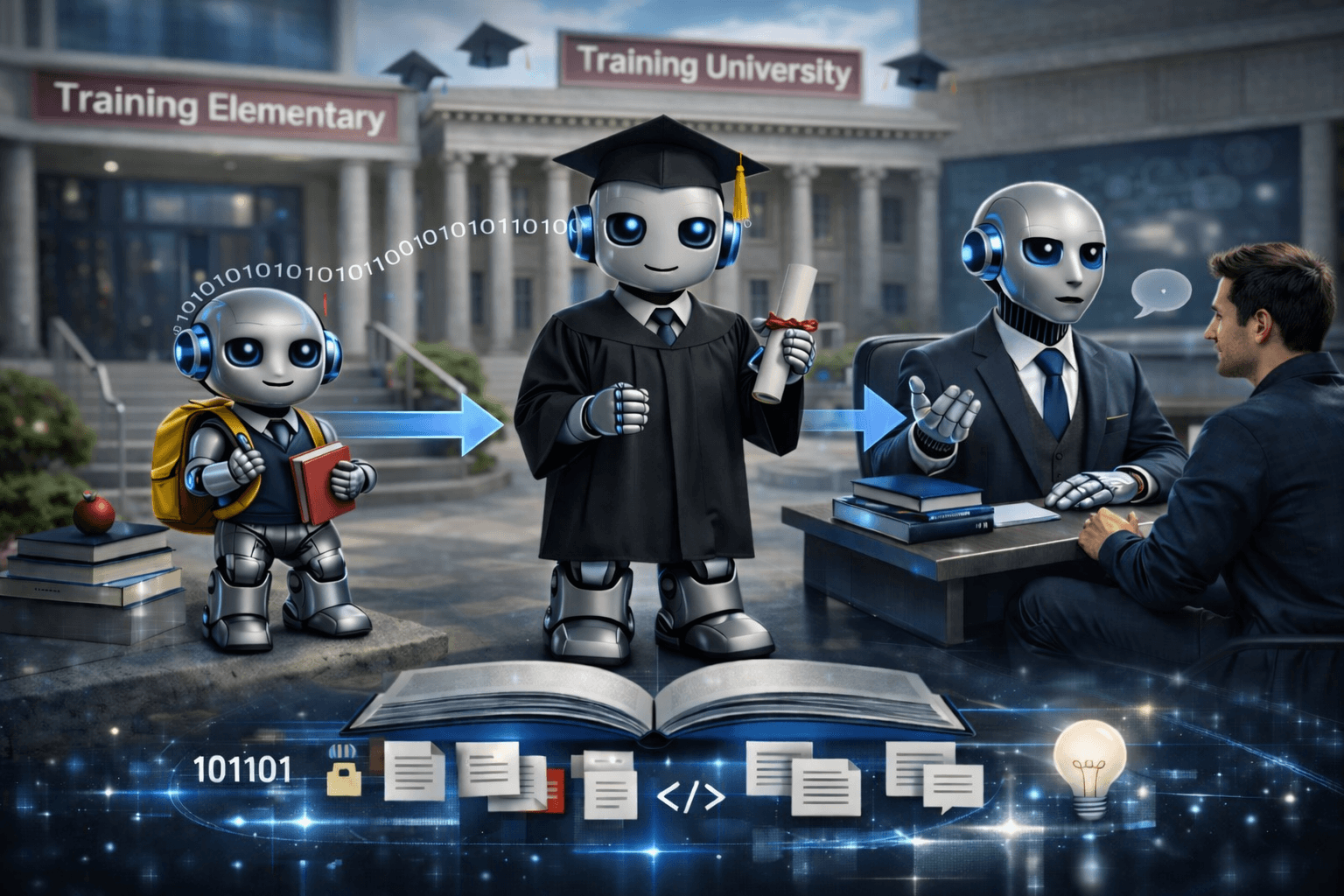

The training of large language models has proven to follow a similar pattern - except that LLMs are not preparing for a single debate or meeting. They are being trained to respond to billions of queries across virtually every subject imaginable, often from users actively trying to probe their weaknesses. One of the most fascinating observations of LLM researchers is that the more knowledge an LLM possesses, the better it appears to generalize that knowledge and apply it to areas it was not specifically trained on.

Just as teaching children a broad curriculum - from math and science to literature and the arts - prepares them to reason more effectively, LLMs seem to benefit from broad training even when tackling narrow tasks.

This insight - that scaling data, compute, and model size could dramatically improve performance - was not obvious at first and surprised even leading researchers. Scientists such as Dario Amodei, now co-founder and CEO of Anthropic, began exploring these scaling laws around a decade ago while working on early language models at OpenAI. As models were trained on increasingly large datasets, often encompassing much of the publicly accessible internet, their capabilities continued to improve. When high-quality human-generated data became scarce, researchers supplemented it with synthetic data and increasingly sophisticated training techniques.

Throughout this process, skeptics - including many AI researchers - repeatedly questioned whether continued scaling would yield meaningful gains. Thus far, scaling has consistently delivered improvements that users experience firsthand when interacting with modern LLMs, even as debates continue about how long this trend can persist.

Leaders in the field, including Google DeepMind's Demis Hassabis, openly acknowledge that gains from additional compute are showing diminishing returns. Amodei himself does not rule out the possibility that progress from pure scaling could eventually plateau. At the same time, there is broad consensus among those at the forefront of the technology - including Hassabis and Amodei - that no such hard limit is currently visible and that meaningful improvements are likely to continue for the foreseeable future.

One reason for this optimism is that scaling is no longer just about ingesting more raw data. Leading LLM companies now invest heavily in improving data quality, generating high-quality synthetic data, and building feedback loops that help models distinguish better answers from worse ones. To extend the school analogy, these companies are not just giving their LLM students more books; they are providing better textbooks, writing proprietary materials, and administering increasingly sophisticated exams - many, many exams. Unsurprisingly, the quality of the LLM's school - and of its teachers - has had a significant impact on model performance.

Despite all the talk about ever-larger data centers, it is generally understood that improvements in modern LLMs cannot be attributed to any single breakthrough. In one illustrative experiment, Andrej Karpathy - a founding member of OpenAI, former head of AI at Tesla, and one of the sages of the modern LLM wave - re-created one of the first neural-network image-recognition models and then retrained it on its original data using modern algorithms. He found that the error rate was roughly halved purely through improved techniques, before any additional data was added. Only afterward did he improve performance further by giving the model ten times more data.

This experiment reinforces the thesis that progress in model performance has come, and will likely continue to come, from many relatively small innovations across architecture, data curation, optimization, training methods, evaluation, and hardware: incremental advances that compound over time.

How, then, do companies create this steady stream of innovations? How can they keep improving their schools and teachers? Largely by attracting exceptional talent. This explains why competition for elite AI researchers has intensified dramatically, with reports of extraordinarily large compensation packages being offered to recruit top scientists. Yet at the highest levels, financial incentives alone have proven insufficient. Many leading researchers choose environments where they can collaborate with peers they respect and learn from others at the cutting edge. It turns out that what attracts talent most is talent itself.

This dynamic helps explain why organizations such as Google DeepMind and Anthropic have become powerful talent magnets. It also sheds light on why technology giants like Amazon, Microsoft, and Apple have often chosen to partner with companies like OpenAI and Anthropic rather than compete with them directly.

In brief, the leadership positions of OpenAI and Anthropic are more defensible than they initially appear. These early AI leaders enjoy a strong first-mover advantage rooted in their talent density, the know-how and research they have built on that foundation, the feedback loops their millions of users provide for continuous improvement, the distribution channels they are building, and, finally, the efficiencies they gain from scale.

This is the first of four posts about the current state of AI. In the next posts, we will delve more deeply into the competitive and strategic dynamics of the industry and the potential threats to the current leaders in this field.

Author:

Image Credit:

ChatGPT - "The Educational Journey of AI"

Disclaimer

The information and opinions contained in this article are for background information and discussion purposes only and do not purport to be full or complete. No information in this article should be construed as providing financial, investment or other professional advice.

The information contained herein is intended for the sole use of the recipient and may not be copied or otherwise distributed or published without the express consent of TOP Venture. Although the information contained herein has been established by TOP Venture based on or by reference to sources, documents and systems it believes to be reliable and accurate, TOP Venture does not guarantee its accuracy or completeness and assumes no responsibility for any losses that may arise from the use of this information. The views and opinions expressed herein are based on current market conditions and are subject to change without notice. No representation is made that any forecast or projection will be realized.